VS2017RC Tooling on Linux

What better way to try out VS2017 RC than by creating a .NET Core solution on Windows and building it on Linux?

However, the tooling from the standard .NET Core installation guide for Linux, as of the date of this post, will not build VS2017 RC projects, because VS2017 RC uses *.csproj files and no longer creates xproj / project.json files.

Expect an error along the lines of:

[user@localhost]$ dotnet run
The current project is not valid because of the following errors:
/home/user/DotNetCoreASP(1,0): error DOTNET1017: Project file does not exist '/home/user/DotNetCoreASP/project.json'.

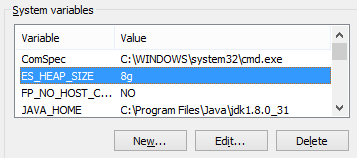
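Before running dotnet, it can help to check which project format a directory actually contains. The following is a minimal sketch, not part of the .NET tooling; the function name project_format is made up for illustration, and it assumes only the two layouts mentioned above.

```shell
# Report which project format a directory uses.
# Assumes the two layouts described in this post: project.json (older tooling)
# or one or more *.csproj files (VS2017 RC tooling).
# project_format is a hypothetical helper, not a dotnet command.
project_format() {
    local dir="${1:-.}"
    local f
    for f in "$dir"/*.csproj; do
        # An unmatched glob stays literal, so check that the path really exists.
        if [ -e "$f" ]; then
            echo "csproj"
            return 0
        fi
    done
    if [ -f "$dir/project.json" ]; then
        echo "project.json"
    else
        echo "unknown"
    fi
}

# Check the directory given as the first argument, or the current one.
project_format "${1:-.}"
```

A result of csproj together with the DOTNET1017 error above suggests the installed CLI predates the *.csproj format.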

A version check shows that the "latest" is not quite as bleeding edge as it needs to be.

[user@localhost /opt/dotnet]$ dotnet --version
1.0.0-preview2-1-003177

Compare this to PowerShell after a VS2017 .NET Core install on the Windows box.

PS C:\> dotnet --version
1.0.0-preview3-004056
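The comparison can be scripted. This is a rough sketch that assumes version strings follow the pattern shown in the two outputs here (a "-previewN" segment); the helper name preview_number is invented for illustration and it does not handle release versions without a preview tag.

```shell
# Extract the preview number from a dotnet CLI version string such as
# "1.0.0-preview3-004056". Prints nothing and returns 1 if there is none.
# preview_number is a hypothetical helper, not part of the dotnet CLI.
preview_number() {
    local rest
    case "$1" in
        *-preview[0-9]*)
            rest="${1#*-preview}"      # drop everything up to "-preview"
            echo "${rest%%[!0-9]*}"    # keep only the leading digits
            ;;
        *)
            return 1
            ;;
    esac
}

# Ask the installed CLI for its version; stay quiet if dotnet is missing.
installed="$(dotnet --version 2>/dev/null || true)"

if n="$(preview_number "$installed")" && [ "$n" -ge 3 ]; then
    echo "CLI $installed is preview3 or later"
else
    echo "CLI '${installed:-not found}' is older than preview3 or not a preview build"
fi
```

With the Linux output above, preview_number yields 2, which is why the VS2017 RC project fails to build there.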

Initially, the only option looked to be building from the preview branch in git.

However, there is a series of preview binaries available for those looking to hit the ground running faster.

https://github.com/dotnet/core/blob/master/release-notes/preview3-download.md

However, that is way too easy.

Building the .NET CLI on Linux

Let's build us the .NET CLI.

After a git clone, switch to the preview 3 branch. If the name below does not match, git branch -r lists the remote branches.

git checkout rel/1.0.0.0-preview3

Initially, it's going to take a while. The bash script that kicks it all off is /build.sh.

The scripts go at least three to four deep. Adding a set -x to the top-level script, next to the existing set -e, gives us some visibility into how the build is going.

#!/usr/bin/env bash
#
# Copyright (c) .NET Foundation and contributors. All rights reserved.
# Licensed under the MIT license. See LICENSE file in the project root for full license information.
#

# Set OFFLINE environment variable to build offline

set -e

# Trace each command as it runs for verbose output
set -x

SOURCE="${BASH_SOURCE[0]}"

...
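One caveat: set -x only affects the shell it runs in, so nested scripts that are started as separate bash processes (rather than sourced) begin with tracing off. A possible way around this, sketched below with two throwaway scripts standing in for build.sh and a script it calls, is to pass SHELLOPTS through the environment. The file names here are stand-ins, not files from the CLI repository.

```shell
# Hypothetical demo: outer.sh and inner.sh stand in for build.sh and a nested script.
demo_dir="$(mktemp -d)"

cat > "$demo_dir/inner.sh" <<'EOF'
#!/usr/bin/env bash
echo "inner step"
EOF

cat > "$demo_dir/outer.sh" <<'EOF'
#!/usr/bin/env bash
set -e
bash "$(dirname "${BASH_SOURCE[0]}")/inner.sh"
EOF

# PS4 is the prefix bash prints before each traced command. Adding the script
# name and line number shows which nested script a command belongs to.
# (bash 4.4 and later ignore an inherited PS4 when running as root.)
export PS4='+ ${BASH_SOURCE##*/}:${LINENO}: '

# SHELLOPTS in the environment enables xtrace in every bash process started
# from here, so nested scripts are traced without editing them.
trace_log="$demo_dir/trace.log"
env SHELLOPTS=xtrace bash "$demo_dir/outer.sh" 2> "$trace_log"

# The trace goes to stderr, captured here in trace.log.
cat "$trace_log"
```

For the real build the equivalent would be running env SHELLOPTS=xtrace ./build.sh from the repository root, which leaves the scripts themselves unmodified.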

Post Build

Once built, set up a new symlink. Because the link would have the same name as the existing executable, dotnet, create it in a subfolder of /usr/local/bin, move it out under a new name, and then remove the folder.

sudo mkdir /usr/local/bin/dotnet-preview3-dir
sudo ln -s /home/user/dev/cli/artifacts/centos.7-x64/stage2/dotnet /usr/local/bin/dotnet-preview3-dir
sudo mv /usr/local/bin/dotnet-preview3-dir/dotnet /usr/local/bin/dotnet-preview3
sudo rm -r /usr/local/bin/dotnet-preview3-dir

You can then have a stable binary and a locally built version side by side.
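The temporary folder can likely be skipped, since ln -s accepts an explicit link name as its second argument. A minimal sketch using throwaway directories in place of the real build output and /usr/local/bin (the sandbox paths are stand-ins, so no sudo is needed to try it):

```shell
# Stand-ins for the real locations: a fake build output and a fake bin directory.
sandbox="$(mktemp -d)"
mkdir -p "$sandbox/stage2" "$sandbox/bin"
touch "$sandbox/stage2/dotnet"

# ln -s TARGET LINK_NAME: naming the link directly gives it a different name
# from the target, so no temporary folder or rename is required.
ln -s "$sandbox/stage2/dotnet" "$sandbox/bin/dotnet-preview3"

# Show where the new link points.
readlink "$sandbox/bin/dotnet-preview3"
```

With the paths used in this post, that would be a single command: sudo ln -s /home/user/dev/cli/artifacts/centos.7-x64/stage2/dotnet /usr/local/bin/dotnet-preview3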

Troubleshooting

One nasty looking error I bumped into was this one, in red, making it extra ominous.

$ dotnet run
/opt/dotnet/sdk/1.0.0-preview3-004056/Microsoft.Common.CurrentVersion.targets(1107,5): error MSB3644: The reference assemblies for framework ".NETFramework,Version=v4.0" were not found. To resolve this, install the SDK or Targeting Pack for this framework version or retarget your application to a version of the framework for which you have the SDK or Targeting Pack installed. Note that assemblies will be resolved from the Global Assembly Cache (GAC) and will be used in place of reference assemblies. Therefore your assembly may not be correctly targeted for the framework you intend. [/home/user/DotNetCore/hwapp/hwapp.csproj]

The build failed. Please fix the build errors and run again.

Let's see, it mentions .NET 4.0 and a GAC, on a Linux box that is running .NET Core.

Not looking good. There are no .NET 4.0 references in the project, and the whole point of .NET Core is to stand alone without a dependency on Mono. As for the GAC reference, this message doesn't give us much to go on.

Until you realise all you need to do is restore the project.

dotnet restore

It does show that while the base cross-platform functionality is there, removing the legacy Windows references is going to be some time away for this fledgling framework.